Chainsail, Tweag’s web service for sampling multimodal probability distributions, is now open-source and awaits contributions and new uses from the community!

Chainsail was released in August 2022 as a beta version in order to collect initial feedback and survey potential use cases and directions for future development. If you’d like to learn more about Chainsail, have a look at the announcement blog post, a detailed analysis of soft k-means clustering using Chainsail or our walkthrough video.

After we presented Chainsail to probabilistic programming communities and scientists, the question we heard most frequently was: when is Chainsail going to be open-source?

The fact that the Chainsail source code was not publicly accessible seems to have hindered engagement with the target communities of computational statisticians, scientists and probabilistic programmers. Tweag is taking that feedback seriously and today announces the open-sourcing of all of Chainsail’s code.

To help future users and contributors find their way around the project, this blog post will give an overview of the service architecture, point out pieces of code that might be of particular interest to certain groups of users, describe Chainsail deployment options and list ideas and issues for which Tweag would particularly welcome contributions.

Service architecture

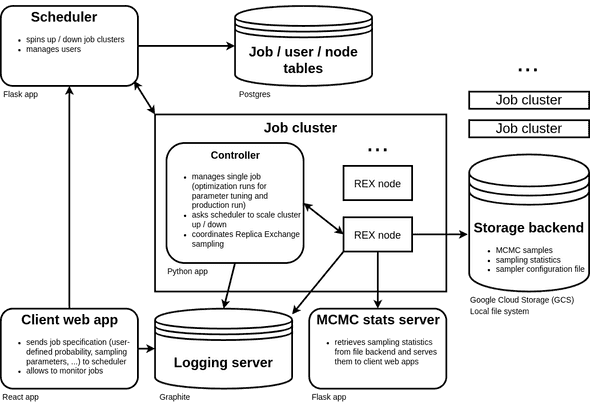

Chainsail is a complex system with multiple, interacting components, but before I describe these components in detail, let’s look at an overview of the Chainsail service architecture:

Let’s quickly review what happens during a typical Chainsail run:

- On the frontend, a user submits their probability distribution and provides a couple of parameters. This information is sent to the job scheduler application that will create database entries representing the newly submitted sampling job. The job scheduler then commences processing of the job by launching a controller instance.

- The controller is the core of a Chainsail job: it orchestrates automatic inverse temperature schedule tuning and Replica Exchange sampling. Note that, despite the similar name, the inverse temperature schedule is completely unrelated to the job scheduler; unfortunately, “schedule” is standard parlance in tempering algorithms. The controller will also ask the job scheduler to scale computing resources up or down, depending on the number of replicas the current simulation run requires.

- The controller, job scheduler and client furthermore communicate with a couple of auxiliary components for logging, sampling statistics, and storing data and configuration.

Now that you know what you’ve signed up for, I will explain the function of these components inside-out: first, the algorithmic core of the service, and then the components that serve the core functionality to the user and allow them to monitor it.

Controller

This is the algorithmic heart of the Chainsail web service. Once a controller is started, either manually when developing, or by the job scheduler when the full service is deployed, the controller does the following:

- Sets up an initial inverse temperature schedule that follows a geometric progression.

- Until the temperature schedule stabilizes, iterates the following recipe for a schedule optimization simulation:

  - Ask the job scheduler to provision a number of nodes equal to the number of temperatures;

  - Draw sensible initial states based on previous optimization iterations or, if this is the first optimization run, use the initial states the user provides;

  - Interpolate HMC timesteps from those used in previous optimization iterations or, if this is the first optimization run, start out with a default timestep;

  - Run a Replica Exchange simulation on the provisioned nodes, during which HMC timesteps are automatically adapted for a while;

  - Calculate the density of states (DOS).

- Performs a final production run.
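The first and third steps of this recipe can be sketched in a few lines of NumPy. This is a minimal illustration rather than Chainsail’s code. The names geometric_schedule and interpolate_timesteps are hypothetical, and carrying timesteps over by linear interpolation in the inverse temperature is our assumption.

```python
import numpy as np


def geometric_schedule(beta_min, n_replicas):
    """Inverse temperatures from 1 down to beta_min, with a constant ratio between neighbors."""
    return np.geomspace(1.0, beta_min, n_replicas)


def interpolate_timesteps(new_betas, old_betas, old_timesteps):
    """Initial HMC timesteps for a new schedule, interpolated from a previous iteration.

    Hypothetical helper: linear interpolation in beta; values outside the old range
    are clamped to the end points (np.interp's default behavior).
    """
    old_betas = np.asarray(old_betas, dtype=float)
    order = np.argsort(old_betas)  # np.interp needs increasing x values
    old_timesteps = np.asarray(old_timesteps, dtype=float)[order]
    return np.interp(new_betas, old_betas[order], old_timesteps)
```

For example, geometric_schedule(0.01, 5) gives roughly 1, 0.316, 0.1, 0.0316 and 0.01, so every pair of neighbors has the same ratio.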

The controller comes in the form of a Python application, the source code of which can be found in app/controller.

Note that the controller can be run independently, without any other Chainsail component.
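In the fourth step of the recipe above, HMC runs while its timestep is being adapted. The sketch below is a textbook leapfrog HMC sampler combined with a simple adaptation rule. After each step during the adaptation phase, the rule multiplies the timestep by exp(rate * (accepted - target)). The rule, the default values and the function names are our own assumptions. This is not the controller’s actual adaptation scheme.

```python
import numpy as np


def hmc_step(x, log_prob, grad_log_prob, timestep, n_leapfrog, rng):
    """One HMC step using the leapfrog integrator; returns (new state, accepted?)."""
    p0 = rng.normal(size=x.shape)
    x_new = x
    p = p0 + 0.5 * timestep * grad_log_prob(x_new)  # initial half momentum step
    for i in range(n_leapfrog):
        x_new = x_new + timestep * p  # full position step
        # full momentum steps in between, a half step at the very end
        p = p + (timestep if i < n_leapfrog - 1 else 0.5 * timestep) * grad_log_prob(x_new)
    # Metropolis correction with Hamiltonian H = -log_prob(x) + |p|^2 / 2
    log_accept = (log_prob(x_new) - 0.5 * p @ p) - (log_prob(x) - 0.5 * p0 @ p0)
    if np.log(rng.uniform()) < log_accept:
        return x_new, True
    return x, False


def run_adaptive_hmc(x0, log_prob, grad_log_prob, n_adapt, n_samples, rng,
                     timestep=0.1, n_leapfrog=10, target=0.7, rate=0.05):
    """Adapt the timestep for n_adapt steps, then keep it fixed and collect n_samples."""
    x = np.asarray(x0, dtype=float)
    samples, n_accepted = [], 0
    for i in range(n_adapt + n_samples):
        x, accepted = hmc_step(x, log_prob, grad_log_prob, timestep, n_leapfrog, rng)
        if i < n_adapt:
            # Grow the timestep after acceptances and shrink it after rejections,
            # so that the acceptance rate drifts towards `target`.
            timestep *= np.exp(rate * (accepted - target))
        else:
            samples.append(x)
            n_accepted += accepted
    return np.array(samples), timestep, n_accepted / n_samples
```

Adaptation stops before samples are collected, so the stored chain is a plain HMC chain with a fixed timestep.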

Density of states estimator

For schedule optimization and for drawing initial Replica Exchange states based on previous iterations, the controller requires an estimate of the density of states (DOS), which is briefly explained in this blog post and which might be discussed in more detail in a forthcoming publication. There are several ways to estimate the density of states; Chainsail implements a binless version of the Weighted Histogram Analysis Method (WHAM), which is widely used in biomolecular simulation.
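To make the idea concrete, here is a minimal binless WHAM in NumPy. It assumes that each replica k, at inverse temperature beta_k, contributed the same number of samples. This is a textbook self-consistent iteration, not the code in lib/common/schedule_estimation. Every pooled sample n gets a DOS weight g_n = 1 / sum_k N_k exp(-beta_k E_n) / Z_k. The partition functions Z_k = sum_n g_n exp(-beta_k E_n) are updated from these weights until both sets of equations agree. The function name and parameters are hypothetical.

```python
import numpy as np


def binless_wham(energies, betas, n_iter=5000, tol=1e-8):
    """Binless WHAM by self-consistent iteration (hypothetical sketch).

    energies: array of shape (K, N), N energies sampled at each of K inverse temperatures.
    betas: array of shape (K,).
    Returns (log_g, log_Z): log DOS weights of the K*N pooled samples and log partition
    functions at each beta, fixed up to a common constant by setting log_Z[0] = 0.
    """
    energies = np.asarray(energies, dtype=float)
    betas = np.asarray(betas, dtype=float)
    log_n = np.log(energies.shape[1])  # same number of samples per replica
    pooled = energies.ravel()
    minus_beta_e = -np.outer(betas, pooled)  # shape (K, K*N)
    log_Z = np.zeros(len(betas))
    for _ in range(n_iter):
        # log g_n = -log sum_k N_k exp(-beta_k E_n) / Z_k
        log_g = -np.logaddexp.reduce(log_n + minus_beta_e - log_Z[:, None], axis=0)
        # log Z_k = log sum_n g_n exp(-beta_k E_n)
        new_log_Z = np.logaddexp.reduce(log_g[None, :] + minus_beta_e, axis=1)
        new_log_Z -= new_log_Z[0]  # the overall constant is arbitrary
        converged = np.max(np.abs(new_log_Z - log_Z)) < tol
        log_Z = new_log_Z
        if converged:
            break
    return log_g, log_Z
```

As a sanity check, consider exact samples from the harmonic energy E = x²/2. In that case the estimated log Z differences should approach ½ log(beta_0 / beta_k), which is the analytical result.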

This WHAM implementation is completely general and might find interesting uses outside of Chainsail, e.g. in conjunction with other tempering methods. It’s also worth noting that the DOS is a very useful quantity for a multitude of analyses; for example, it can be used to easily estimate the normalization constant of a probability distribution, which means that, in the case of a Bayesian model, the model evidence can be calculated from the DOS.
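Given per-sample log DOS weights log_g for energies E, for instance from a binless WHAM estimate, the normalization constant at any inverse temperature is a single log-sum-exp, log Z(beta) = log sum_n g_n exp(-beta E_n). This holds in general. Reading Z(1) / Z(0) as the model evidence additionally assumes two things: that E is the negative log-likelihood (likelihood tempering) and that the prior is normalized. In practice this ratio is only reliable if the simulation actually sampled down to, or near, beta = 0. The function names are hypothetical.

```python
import numpy as np


def log_partition(log_g, energies, beta):
    """log Z(beta) = log sum_n g_n exp(-beta * E_n) for a DOS given as per-sample weights."""
    return np.logaddexp.reduce(np.asarray(log_g) - beta * np.asarray(energies))


def log_evidence(log_g, energies):
    """log Z(1) - log Z(0); equals the log model evidence under likelihood tempering
    with a normalized prior. The arbitrary constant in log_g cancels in the difference."""
    return log_partition(log_g, energies, 1.0) - log_partition(log_g, energies, 0.0)
```

In a toy case with two equally weighted states of negative log-likelihood 0 and 1, log_evidence returns log((1 + e^-1) / 2), which is the log of the prior-averaged likelihood.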

The code can be found in lib/common/schedule_estimation.

Automatic schedule tuning

As described above, the controller automatically tunes the inverse temperature schedule with an iterative procedure. In each iteration, it tries to find a sequence of inverse temperatures such that the Replica Exchange acceptance rates predicted for neighboring temperatures match the target acceptance rate desired by the user. Predicting these acceptance rates is the main reason the controller calculates a DOS estimate at each iteration.
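The sketch below shows one straightforward way to turn a DOS estimate into a schedule. First, reweight the DOS to get the energy distribution at each inverse temperature. Next, average the swap acceptance probability min(1, exp((beta_i - beta_j)(E_i - E_j))) over pairs of energies. Finally, bisect for the next inverse temperature whose predicted acceptance rate hits the target. This is our illustration of the idea, not Chainsail’s algorithm; the technical note describes what Chainsail actually does. All function names are hypothetical. The pairwise average costs O(M²) for M energies, so it is only practical for modest M.

```python
import numpy as np


def _energy_weights(log_g, energies, beta):
    """Normalized probabilities p_beta(E_n), proportional to g_n * exp(-beta * E_n)."""
    log_w = log_g - beta * energies
    return np.exp(log_w - np.logaddexp.reduce(log_w))


def predicted_acceptance(log_g, energies, beta_i, beta_j):
    """Expected Replica Exchange swap acceptance between beta_i and beta_j."""
    p_i = _energy_weights(log_g, energies, beta_i)
    p_j = _energy_weights(log_g, energies, beta_j)
    # acceptance for energy E_a at beta_i and E_b at beta_j: min(1, exp((beta_i - beta_j)(E_a - E_b)))
    log_ratio = (beta_i - beta_j) * (energies[:, None] - energies[None, :])
    return p_i @ np.exp(np.minimum(0.0, log_ratio)) @ p_j


def tune_schedule(log_g, energies, target, beta_min, n_bisect=60, max_replicas=1000):
    """Walk down from beta = 1, placing each next beta where the predicted acceptance is `target`."""
    schedule = [1.0]
    while schedule[-1] > beta_min and len(schedule) < max_replicas:
        current = schedule[-1]
        if predicted_acceptance(log_g, energies, current, beta_min) >= target:
            schedule.append(beta_min)  # the last gap is small enough
            break
        lo, hi = beta_min, current  # acceptance < target at lo, >= target at hi
        for _ in range(n_bisect):
            mid = 0.5 * (lo + hi)
            if predicted_acceptance(log_g, energies, current, mid) >= target:
                hi = mid
            else:
                lo = mid
        schedule.append(0.5 * (lo + hi))
    return np.array(schedule)
```

Because only the DOS enters these functions, the same approach would apply to any Replica Exchange implementation.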

The automatic schedule tuning depends only on a DOS estimate and is thus easily reusable for Replica Exchange implementations other than Chainsail, for example in TensorFlow Probability or ptemcee.

The code is provided in the same Python package as the DOS estimator, namely lib/common/schedule_estimation.

For the curious reader with the necessary mathematical and physics background, a technical note explains the DOS-based schedule tuning and drawing of appropriate initial Replica Exchange states.

MPI-based Replica Exchange runner

Once a schedule, initial states and timesteps are determined, the controller launches a Replica Exchange simulation implemented in a runner.

Chainsail currently implements a single runner based on rexfw, a Message Passing Interface (MPI)-based Replica Exchange implementation.

The Chainsail-specific runner code is thus only a thin wrapper around the (very general) rexfw library and can be used both on a single machine and in a Kubernetes cluster.
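For readers new to Replica Exchange, the following single-process toy shows the two kinds of move a runner alternates between. The first is a local Metropolis move within each replica. The second is a swap between neighboring inverse temperatures, accepted with probability min(1, exp((beta_i - beta_j)(E_i - E_j))). This is not rexfw’s API and it involves no MPI: all replicas live in one NumPy array here. The bimodal target, the proposal widths and the function names are made up for illustration.

```python
import numpy as np


def energy(x):
    """Negative log density (up to a constant) of an equal mixture of N(-4, 0.5^2) and N(4, 0.5^2)."""
    return -np.logaddexp(-0.5 * ((x + 4) / 0.5) ** 2, -0.5 * ((x - 4) / 0.5) ** 2)


def replica_exchange(betas, n_steps, rng):
    """Toy Replica Exchange; returns the trajectory of the beta = 1 replica (betas[0])."""
    betas = np.asarray(betas, dtype=float)
    x = np.full(len(betas), -4.0)  # every replica starts in the left mode
    samples = np.empty(n_steps)
    for step in range(n_steps):
        # Local moves: random-walk Metropolis at each replica's inverse temperature;
        # flatter (low-beta) replicas get wider proposals.
        proposal = x + rng.normal(scale=0.5 / np.sqrt(betas))
        log_accept = -betas * (energy(proposal) - energy(x))
        accept = np.log(rng.uniform(size=len(x))) < log_accept
        x = np.where(accept, proposal, x)
        # Swap moves between neighbors, alternating even and odd pairs.
        for i in range(step % 2, len(betas) - 1, 2):
            j = i + 1
            log_accept = (betas[i] - betas[j]) * (energy(x[i]) - energy(x[j]))
            if np.log(rng.uniform()) < log_accept:
                x[i], x[j] = x[j], x[i]
        samples[step] = x[0]
    return samples


samples = replica_exchange(np.geomspace(1.0, 0.01, 10), 5000, np.random.default_rng(1))
```

On its own, a random-walk chain at beta = 1 would practically never cross the barrier between the modes. Swaps let states that crossed at high temperature travel down to the target replica.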

As Replica Exchange is a bit more widely known in scientific circles and the rexfw library has its origin in scientific research, an interesting option could be to run rexfw and even the full controller on HPC clusters.

The rexfw-based runner can be found in lib/runners/rexfw.

Job scheduler

The job scheduler is a Flask-based Python app that serves multiple purposes:

- It receives job creation, launch, and stop requests from the user;

- It keeps track of the job state;

- It scales the resources (nodes) available to the controller up or down;

- And it compresses sampling results into a .zip file.

It keeps track of users, jobs, and nodes in tables of a PostgreSQL database. The job scheduler currently knows two node types, namely a (now deprecated) VM node in which each replica is run on a separate VM, and a Kubernetes-based implementation in which a node is represented by a pod.
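An in-memory toy can illustrate the bookkeeping side of the job scheduler, namely job states plus scaling nodes up and down. Everything here is hypothetical. No Flask, PostgreSQL or Kubernetes is involved, and the state names and methods are ours rather than those in app/scheduler.

```python
from dataclasses import dataclass, field
from enum import Enum
from itertools import count


class JobStatus(Enum):
    # Hypothetical states for illustration; Chainsail's actual job states may differ.
    INITIALIZED = "initialized"
    RUNNING = "running"
    STOPPED = "stopped"


@dataclass
class Job:
    id: int
    status: JobStatus = JobStatus.INITIALIZED
    node_ids: list = field(default_factory=list)  # one entry per node (e.g. a pod)


class InMemoryScheduler:
    """Toy stand-in for the job scheduler's bookkeeping: no web API, database or cluster."""

    def __init__(self):
        self.jobs = {}
        self._job_ids = count(1)
        self._node_ids = count(1)

    def create_job(self):
        job = Job(id=next(self._job_ids))
        self.jobs[job.id] = job
        return job

    def launch_job(self, job_id):
        job = self.jobs[job_id]
        if job.status is not JobStatus.INITIALIZED:
            raise ValueError(f"job {job_id} cannot be launched from state {job.status.value}")
        job.status = JobStatus.RUNNING

    def scale_job(self, job_id, n_nodes):
        """Add or remove nodes until the job has exactly n_nodes of them."""
        job = self.jobs[job_id]
        if job.status is not JobStatus.RUNNING:
            raise ValueError(f"job {job_id} is not running")
        while len(job.node_ids) < n_nodes:
            job.node_ids.append(next(self._node_ids))  # "provision" a new node
        del job.node_ids[n_nodes:]  # release surplus nodes, if any

    def stop_job(self, job_id):
        job = self.jobs[job_id]
        job.node_ids.clear()  # release all compute resources
        job.status = JobStatus.STOPPED
```

In this toy, the controller would call something like scale_job(job.id, n_replicas) before each simulation run. A real deployment would also persist every change to the database.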

The job scheduler code is located in app/scheduler.

Client web app

While a user can interact with Chainsail via the HTTP APIs of the job scheduler and other components, the most comfortable way to do so is via the frontend web app. It is written in React and comprises the following parts:

- A landing page that presents Chainsail to the user and shows links to essential resources, such as blog posts and the chainsail-resources repository;

- A job submission form that documents the most crucial parameters, lets the user set them, and allows the upload of a .zip file with the probability distribution definition;

- A job table that shows all jobs a user has created and possibly launched, with their current status;

- A dashboard that allows the user to follow a sampling job’s progress, monitor Replica Exchange acceptance rates and convergence, and view Chainsail’s logging output.

If mandatory user authentication via Firebase is enabled, only the landing page is accessible without the user being logged in. For every other page, the user will have to sign in with their Gmail address, and a token is generated that is sent along to the job scheduler, which then stores it in a user database table.

The client web app can be found in app/client.

Other components

The above really are the key components of the Chainsail service, but there are a couple of other applications and services that are necessary to make full Chainsail deployments run. Among them are a small Flask application that serves MCMC sampling statistics to the frontend application, a Celery task queue to which job scheduler requests are offloaded, and a Docker image that runs a gRPC server providing an encapsulated interface to the user-provided code.

Deploying Chainsail

As you have now seen, Chainsail is a big system, but rest assured, deploying it and testing it out isn’t that hard! You currently have three ways to deploy (a subset of) Chainsail:

- Run the controller, and thus Chainsail’s core algorithm, on a single machine:

  This is as easy as using the provided Poetry packaging to get a Python shell with all required dependencies, adding the dependencies your probability distribution code needs, setting parameters in a JSON configuration file and running the controller with a simple command. Note that this currently requires an OpenMPI (or another MPI) installation.

- Run the full Chainsail service locally using Minikube:

  Minikube provides a local environment to run Kubernetes applications. Given a running Minikube installation, Chainsail can be deployed very easily using Terraform configuration and Helm charts. All it takes is a couple of commands, which are described in our deployment documentation.

- Run the full Chainsail service on Google Cloud:

  Finally, the complete Chainsail web service can be deployed to the Google Cloud Platform, again thanks to Terraform configuration and Helm charts. Note, though, that this requires setting quite a few configuration options, such as regions and VM machine types, although defaults are provided. These configuration options are currently not documented, so you would have to browse the relevant Terraform and Helm configuration files yourself.
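Whichever option you choose, you supply your probability distribution as Python code. The sketch below shows what such code could look like for a two-mode Gaussian mixture. We assume an interface consisting of an object named pdf that exposes log_prob(x) and log_prob_gradient(x), plus an array initial_states. These names are our assumption, so please check the chainsail-resources repository for the exact requirements.

```python
import numpy as np


class GaussianMixture:
    """Equal-weight mixture of two isotropic Gaussians centered at +mu and -mu (toy example)."""

    def __init__(self, mu, sigma):
        self.mu = np.asarray(mu, dtype=float)
        self.sigma = float(sigma)

    def _component_log_probs(self, x):
        # Unnormalized log densities of both components (they share the same constant).
        return np.array([-0.5 * np.sum((x - m) ** 2) / self.sigma**2 for m in (self.mu, -self.mu)])

    def log_prob(self, x):
        return np.logaddexp.reduce(self._component_log_probs(x))

    def log_prob_gradient(self, x):
        log_w = self._component_log_probs(x)
        weights = np.exp(log_w - np.logaddexp.reduce(log_w))  # component responsibilities
        return sum(w * -(x - m) / self.sigma**2 for w, m in zip(weights, (self.mu, -self.mu)))


# Assumed entry points (check the Chainsail documentation for the required names):
pdf = GaussianMixture(mu=[3.0, 3.0], sigma=1.0)
initial_states = np.array([1.0, 1.0])
```

Both methods work with unnormalized densities, which is all that MCMC sampling needs.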

Future work

Chainsail in its current state is still a proof of concept, but Tweag is convinced that it could become a useful and widely applicable tool for everyone who has to deal with multimodal probability distributions. To this end, a couple of interesting possibilities for future developments include:

- Replace the very simple HMC implementation with a state-of-the-art NUTS sampler, e.g. via the BlackJAX library (#423).

- Replace the clunky and insanely slow Stan support (which currently works via requests to an httpstan instance) with a wrapper around BridgeStan (#424).

- Improve the inverse temperature schedule optimization to deal with “phase transitions”, meaning distributions for which the Replica Exchange acceptance rate suddenly drops to zero as the inverse temperature increases (#425).

- Speed up and improve candidate schedule calculation by having an adaptive inverse temperature decrement (#426).

- Implement a lightweight local runner for easier testing and debugging (#427).

- Write a dedicated interface to calculate the model evidence and other quantities from the DOS, possibly in a separate Python package.

While Chainsail has a large number of unit tests and a couple of functional tests, it would still profit immensely from improved testing and a continuous integration (CI) setup.

For example, Docker containers could be built automatically by CI and an automatic end-to-end integration test of the service could be performed using the Minikube deployment option.

Docker images are currently built from Dockerfiles with the docker build command, but packaging Chainsail components via Nix and its dockerTools library is under development.

Conclusion

Now that Chainsail is open-source, I hope that this brief tour of Chainsail’s key components piques interest in the project. Be it code reuse in other projects, any kind of contribution to the code base, or ideas for interesting problems Chainsail could solve, Tweag welcomes feedback and engagement from probabilistic programmers, statisticians, scientists and everyone else who is as excited about this project as we are!

Behind the scenes

Simeon is a theoretical physicist who has undergone several transformations. A journey through Markov chain Monte Carlo methods, computational structural biology, a wet lab and renowned research institutions in Germany, Paris and New York finally led him back to Paris and to Tweag, where he is currently leading projects for a major pharma company. During pre-meeting smalltalk, ask him about rock climbing, Argentinian tango or playing the piano!

If you enjoyed this article, you might be interested in joining the Tweag team.